Agents write the workflow.

The extension makes it visible.

Why the extension exists

Turn exploration into a workflow you can keep.

The extension turns agent-written verification into something you can inspect like normal engineering work: code on the left, evidence beside it, debugger when needed. `explore/` is where exploratory API work can replace Postman without fragmenting the stack or trapping drafts in a second tool.

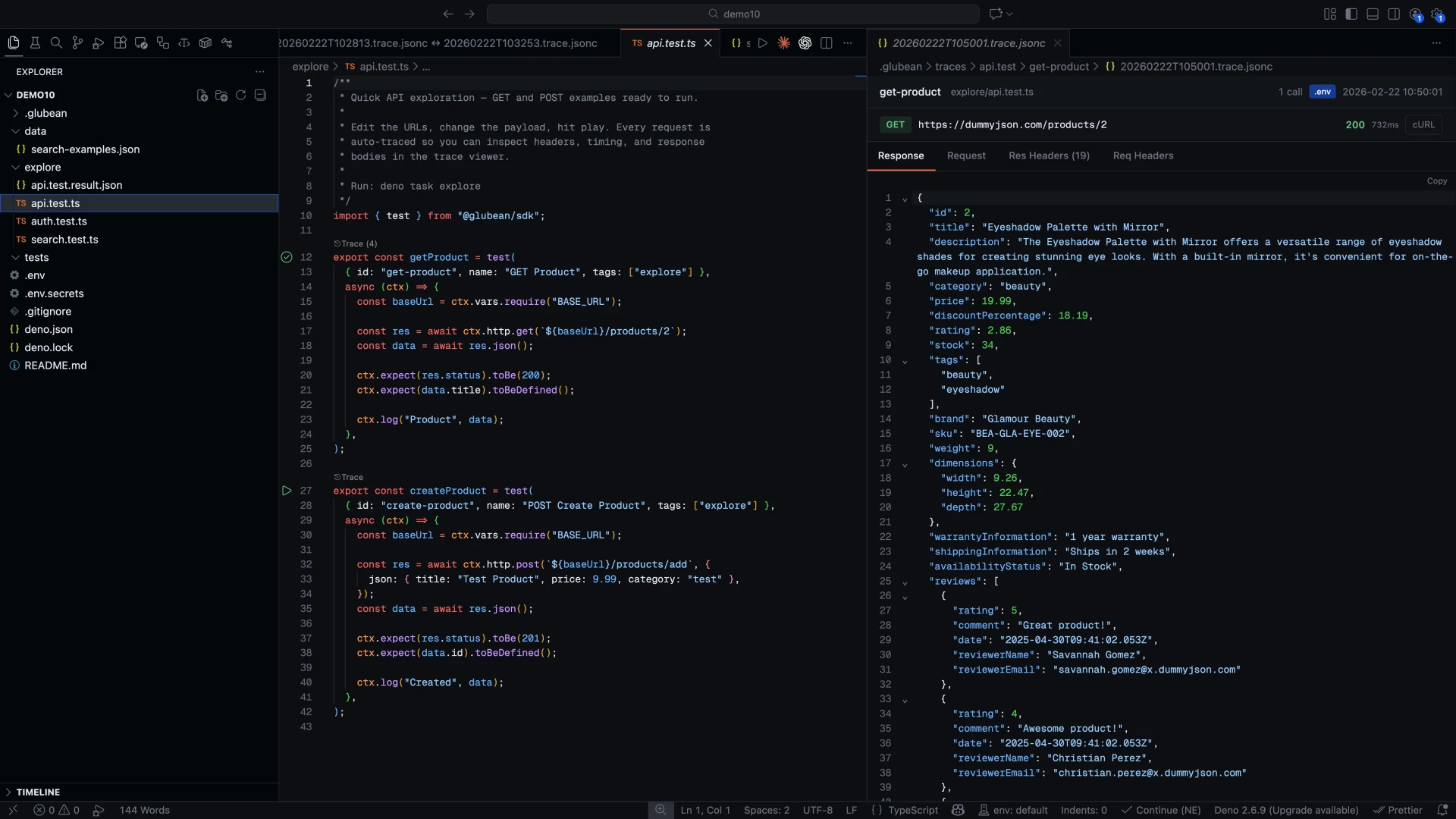

Run the draft inline

Open the workflow your agent wrote and run it from the gutter without leaving the editor.

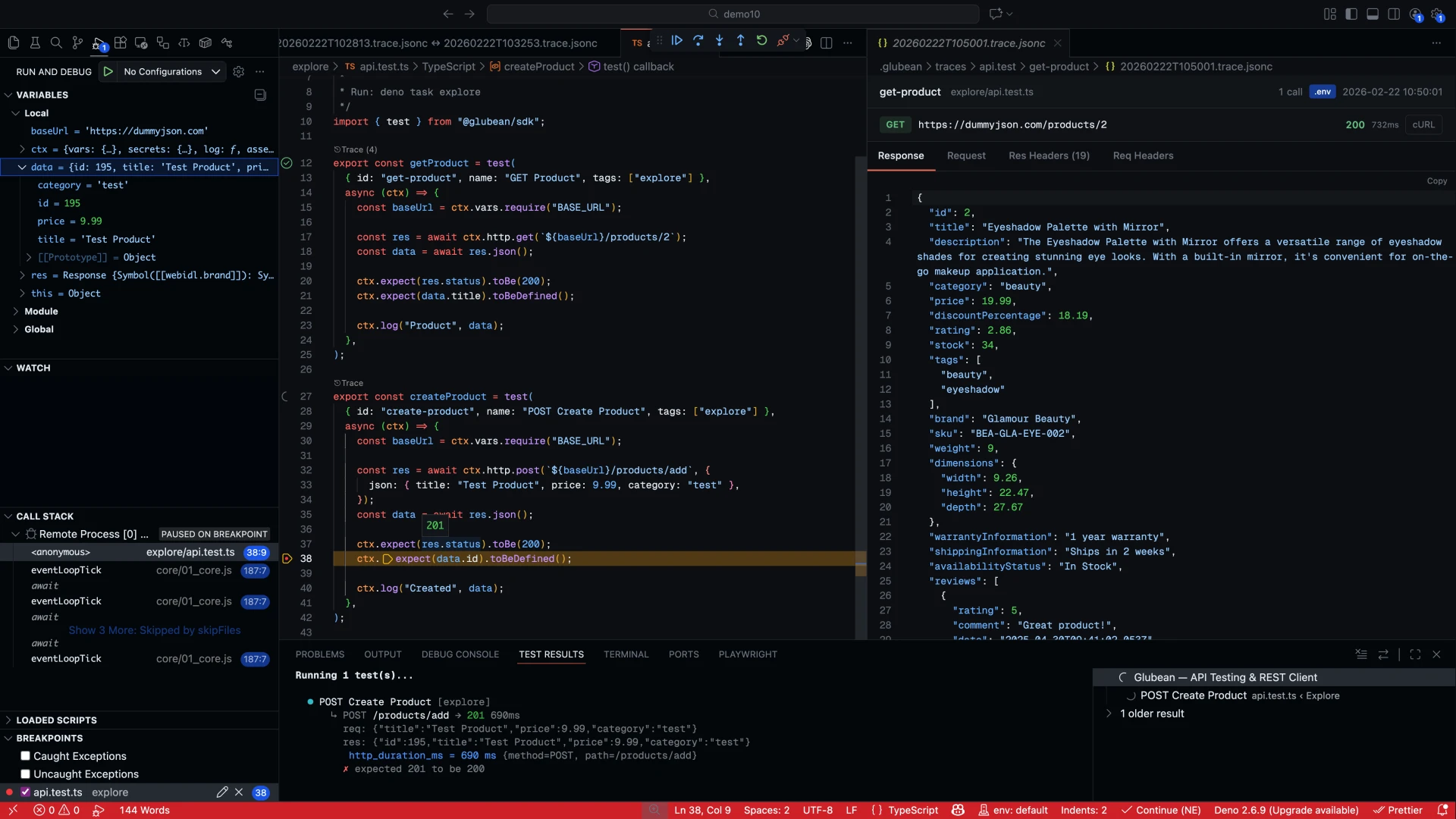

Debug real TypeScript

Set breakpoints, inspect variables, and step through the code instead of guessing from terminal output.

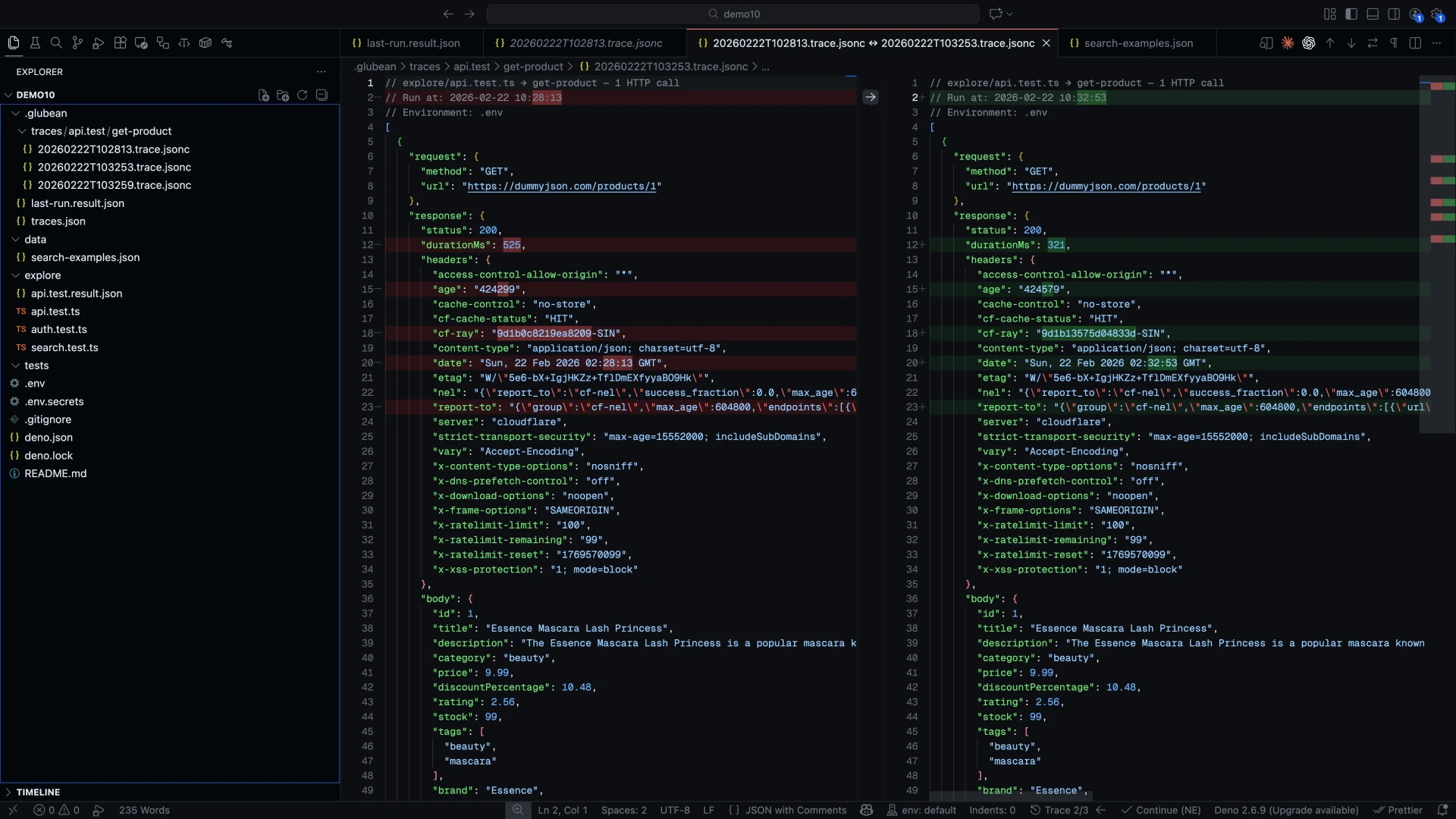

Inspect traces and diffs

Compare runs, inspect evidence, and decide whether the workflow is trustworthy enough to keep.

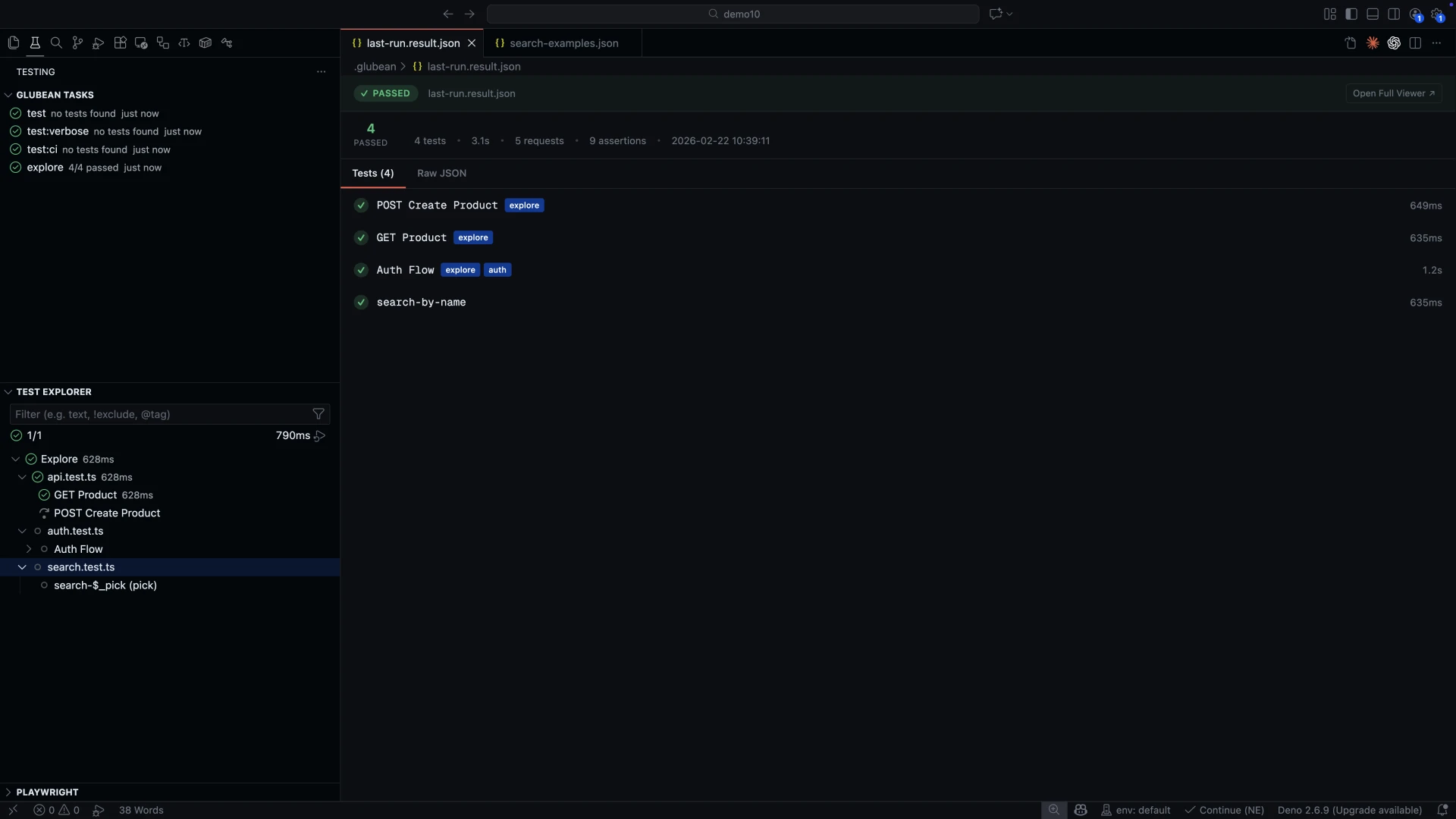

Promote the same file

A workflow can start in `explore/` as a Postman replacement, then become the same file you commit, review, and promote to CI.

End to end

From `explore/` draft to trusted workflow.

The extension is where you validate what the agent produced and decide whether the draft earns a place in the repo.

Prompt and context

“Verify checkout with promo code, tax calculation, and webhook delivery.”

Context fed into generation

Agent draft

```typescript
import { test } from "@glubean/sdk";

export const listUserRepos = test(
  { id: "github-list-repos", tags: ["explore"] },
  async (ctx) => {
    // require() is for values the test cannot run without.
    const username = ctx.vars.require("GITHUB_USERNAME");
    // get() is for optional values: unauthenticated GitHub requests still work, at a lower rate limit.
    const token = ctx.vars.get("GITHUB_TOKEN");

    const headers: Record<string, string> = {
      Accept: "application/vnd.github+json",
    };
    if (token) {
      headers["Authorization"] = `Bearer ${token}`;
    }

    // Pass the headers so the Accept and Authorization values are actually sent.
    const data = await ctx.http
      .get(`https://api.github.com/users/${username}/repos`, { headers })
      .json();

    ctx.expect(data.length).toBeGreaterThan(0);
    ctx.log("Repos", data.slice(0, 3));
  },
);
```

What the agent did

Used your OpenAPI routes

Matched auth, cart, promo, and checkout paths from the project spec.

Generated SDK workflow code

Named steps and typed assertions, not free-form fetch calls.

Followed project conventions

Respected existing config helpers and file placement.

Ready to run immediately

Click play to see traces and verify the draft before committing it.

Starts where Postman used to live

Exploratory API work can begin in `explore/`, then graduate into durable tests without changing tools.

Prompt

Context goes in

Code

TypeScript comes out

Trace

Evidence closes the loop

Why the draft stays grounded

Agent output still needs workflow structure.

The extension is useful because the draft is not generic. Specs, skills, SDK surfaces, and MCP tools keep the workflow tied to your project instead of drifting into demo code.

Targets the SDK, not raw scripts

Agent-written code uses Glubean workflow primitives (named steps, typed assertions, shared config), so the output looks like code your team wrote.

OpenAPI context stays focused

Annotated specs and reduced context keep the agent focused on the routes, constraints, and response shapes that matter.
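The card does not say how the spec is reduced. One simple way to picture "reduced context" is filtering an OpenAPI document's `paths` object down to the routes a task touches. The sketch below assumes that technique; `reduceSpec` and the sample spec are hypothetical illustrations, not part of Glubean.

```typescript
// Minimal subset of an OpenAPI 3 document: only the fields this sketch touches.
interface OpenApiDoc {
  openapi: string;
  info: { title: string; version: string };
  paths: Record<string, Record<string, unknown>>;
}

// Hypothetical helper: keep only path templates that start with one of the
// given prefixes. Returns a new document and leaves the input untouched.
function reduceSpec(doc: OpenApiDoc, prefixes: string[]): OpenApiDoc {
  const paths: OpenApiDoc["paths"] = {};
  for (const [route, item] of Object.entries(doc.paths)) {
    if (prefixes.some((prefix) => route.startsWith(prefix))) {
      paths[route] = item;
    }
  }
  return { ...doc, paths };
}

// Hypothetical spec for a checkout task; /admin routes are irrelevant to it.
const spec: OpenApiDoc = {
  openapi: "3.0.3",
  info: { title: "Shop", version: "1.0.0" },
  paths: {
    "/auth/login": { post: {} },
    "/cart": { get: {} },
    "/checkout": { post: {} },
    "/admin/reports": { get: {} },
  },
};

const reduced = reduceSpec(spec, ["/auth", "/cart", "/checkout"]);
console.log(Object.keys(reduced.paths).join(", ")); // /auth/login, /cart, /checkout
```

A plain prefix match is deliberately crude (`/cart` would also match `/cartography`), and a fuller reducer would likely also prune unreferenced `components` so schemas for dropped routes do not leak back into the context.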

Skills lock what matters

A skill file teaches the agent your exact SDK API, auth patterns, assertion style, and conventions so drafts follow your team's patterns from the start.

MCP closes the loop

MCP tools let the agent run the workflow, read structured failures, fix the code, and rerun without dropping back to pasted logs.
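What makes a failure "structured" is that it arrives as fields rather than free text. The sketch below is a hypothetical illustration in plain TypeScript; `FailureRecord` and `checkGreaterThan` are invented names, not Glubean's or MCP's actual result format. It re-expresses the draft's `toBeGreaterThan(0)` check so a failing run yields a record the agent can inspect field by field.

```typescript
// Hypothetical shape of a structured failure a tool could hand back to an agent.
interface FailureRecord {
  testId: string;
  check: string;
  expected: string;
  actual: string;
}

// Evaluate a "greater than" check. Returns null when it passes, or a
// structured record describing the failure when it does not.
function checkGreaterThan(
  testId: string,
  label: string,
  actual: number,
  min: number,
): FailureRecord | null {
  if (actual > min) return null;
  return {
    testId,
    check: `${label} > ${min}`,
    expected: `> ${min}`,
    actual: String(actual),
  };
}

// Simulate the draft receiving an empty repo list.
const failure = checkGreaterThan("github-list-repos", "data.length", 0, 0);
if (failure) {
  // Serialized as JSON, the agent can read fields directly instead of parsing log lines.
  console.log(JSON.stringify(failure));
}
```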

Inside the extension

Run, inspect, debug, diff. Stay in one window.

Once the agent writes the draft, the extension keeps the feedback loop tight: run it, check the trace, fix what needs fixing, and move on without leaving the editor.

Inline play buttons

Run one workflow, one file, or the whole workspace directly from the editor gutter.

Breakpoint debugging

Step through real code and inspect variables instead of guessing from logs.

Trace diff

Compare two runs with native diff to see exactly what changed in the workflow.
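Conceptually, a trace diff lines up the calls from two runs and reports which fields changed. The sketch below assumes a simplified, hypothetical `TraceEntry` shape (method, URL, status, body); Glubean's real trace format is likely richer, and the extension uses the editor's native diff view rather than code like this.

```typescript
// Hypothetical, simplified trace entry for one HTTP call in a run.
interface TraceEntry {
  method: string;
  url: string;
  status: number;
  body: unknown;
}

interface TraceChange {
  index: number;
  field: keyof TraceEntry;
  before: unknown;
  after: unknown;
}

// Compare two runs call-by-call and report each field that differs.
// Values are compared via JSON.stringify, which is sensitive to key order.
function diffTraces(before: TraceEntry[], after: TraceEntry[]): TraceChange[] {
  const fields: (keyof TraceEntry)[] = ["method", "url", "status", "body"];
  const changes: TraceChange[] = [];
  const count = Math.max(before.length, after.length);
  for (let i = 0; i < count; i++) {
    for (const field of fields) {
      // A call missing from one run shows up as undefined on that side.
      const av = before[i]?.[field];
      const bv = after[i]?.[field];
      if (JSON.stringify(av) !== JSON.stringify(bv)) {
        changes.push({ index: i, field, before: av, after: bv });
      }
    }
  }
  return changes;
}

// Hypothetical runs: same request, different outcome.
const run1: TraceEntry[] = [
  { method: "GET", url: "/users/octocat/repos", status: 200, body: [{ name: "hello-world" }] },
];
const run2: TraceEntry[] = [
  { method: "GET", url: "/users/octocat/repos", status: 401, body: { message: "unauthorized" } },
];

for (const change of diffTraces(run1, run2)) {
  console.log(`call ${change.index}: ${change.field} changed`);
}
```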

Schema and contract checks

Use built-in validation patterns so generated code has real assertions behind it.
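"Real assertions" means checking the shape of a response, not just that it is non-empty. The hand-rolled sketch below does not show Glubean's built-in validation API; it is a plain TypeScript type guard, with the hypothetical names `RepoSummary` and `validateRepoList`, that checks a few fields the GitHub repos endpoint used in the draft does return (`name`, `full_name`, `private`).

```typescript
// Minimal shape this sketch expects for each repo; the real payload has many more fields.
interface RepoSummary {
  name: string;
  full_name: string;
  private: boolean;
}

// Type guard: true only if the value has the three fields with the right types.
function isRepoSummary(value: unknown): value is RepoSummary {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return (
    typeof v.name === "string" &&
    typeof v.full_name === "string" &&
    typeof v.private === "boolean"
  );
}

// Returns a list of problems; an empty list means the payload matches the contract.
function validateRepoList(payload: unknown): string[] {
  if (!Array.isArray(payload)) return ["payload is not an array"];
  const problems: string[] = [];
  payload.forEach((item, i) => {
    if (!isRepoSummary(item)) problems.push(`item ${i} does not match RepoSummary`);
  });
  return problems;
}

console.log(
  validateRepoList([{ name: "hello-world", full_name: "octocat/hello-world", private: false }]).length,
); // 0
console.log(validateRepoList({ message: "Not Found" }));
```

Returning a list of problems, rather than throwing on the first mismatch, keeps every violation visible in one run.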

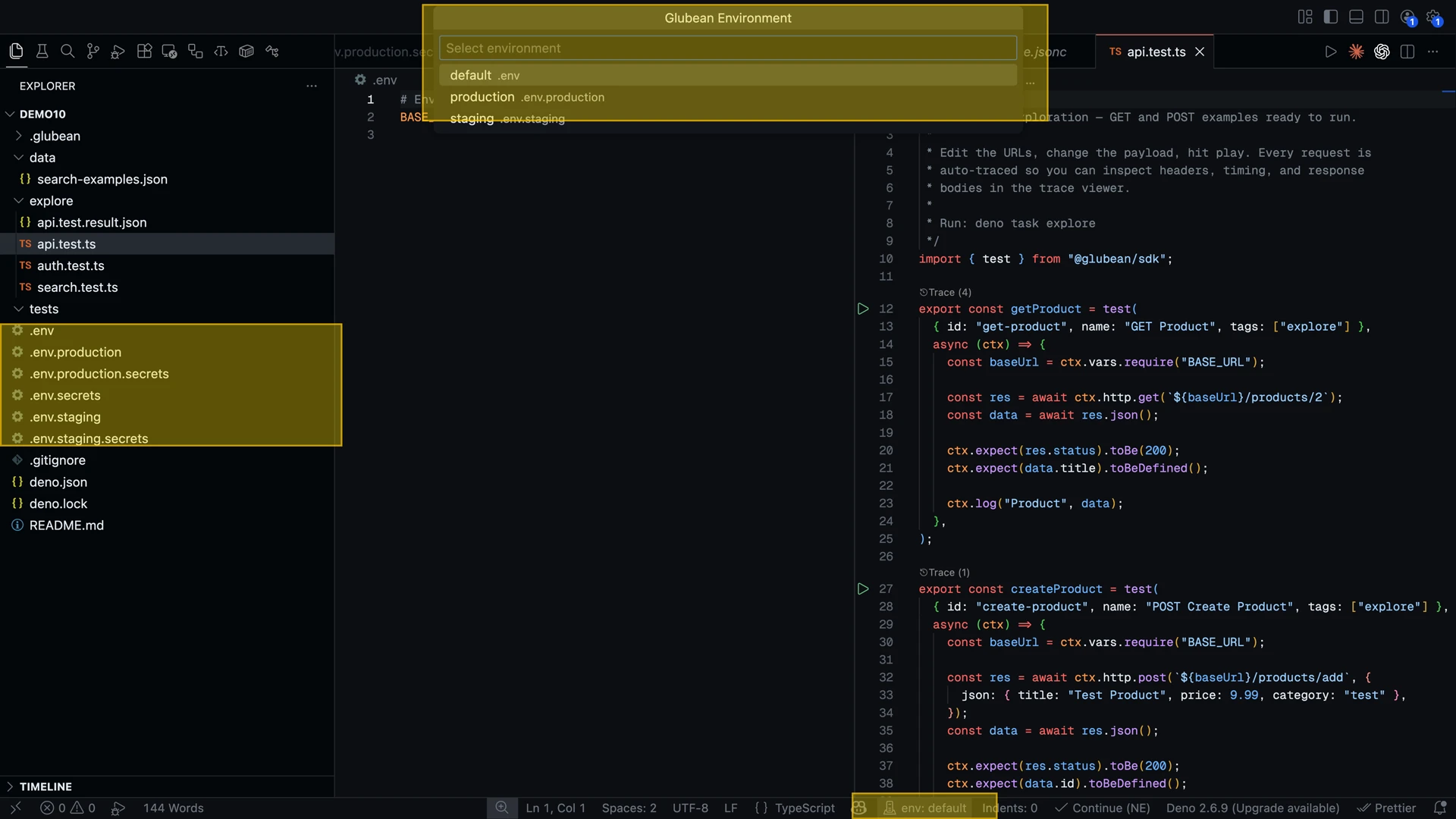

Environment switching

Switch between local, staging, and production environments from the status bar without rewriting the test.
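Environment switching works because the test reads values through `ctx.vars` instead of hard-coding them. The sketch below is a hypothetical stand-in for that lookup: `makeVars` and the per-environment maps are invented for illustration, and it assumes, as the method names in the draft suggest, that `get()` may return `undefined` while `require()` fails when a value is missing.

```typescript
type EnvName = "local" | "staging" | "production";

// Hypothetical per-environment values; in a real project these would live in env files, not code.
const environments: Record<EnvName, Record<string, string>> = {
  local: { BASE_URL: "http://localhost:3000", GITHUB_USERNAME: "octocat" },
  staging: { BASE_URL: "https://staging.example.com", GITHUB_USERNAME: "octocat" },
  production: { BASE_URL: "https://api.example.com" },
};

// Hypothetical lookup mirroring the assumed get()/require() semantics.
function makeVars(env: EnvName) {
  const values = environments[env];
  return {
    // Optional value: undefined when the key is absent.
    get(key: string): string | undefined {
      return values[key];
    },
    // Required value: throws with the key and environment named.
    require(key: string): string {
      const value = values[key];
      if (value === undefined) {
        throw new Error(`Missing required var "${key}" in environment "${env}"`);
      }
      return value;
    },
  };
}

// The same test body runs against whichever environment is selected.
for (const env of ["local", "staging"] as const) {
  const vars = makeVars(env);
  console.log(env, vars.require("BASE_URL"), vars.get("GITHUB_TOKEN") ?? "(no token)");
}
```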

Git-native files

Everything stays in TypeScript, so PR review, branching, and refactoring work like normal.